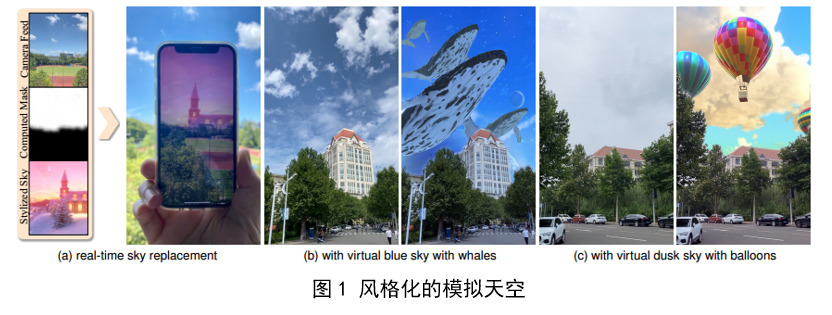

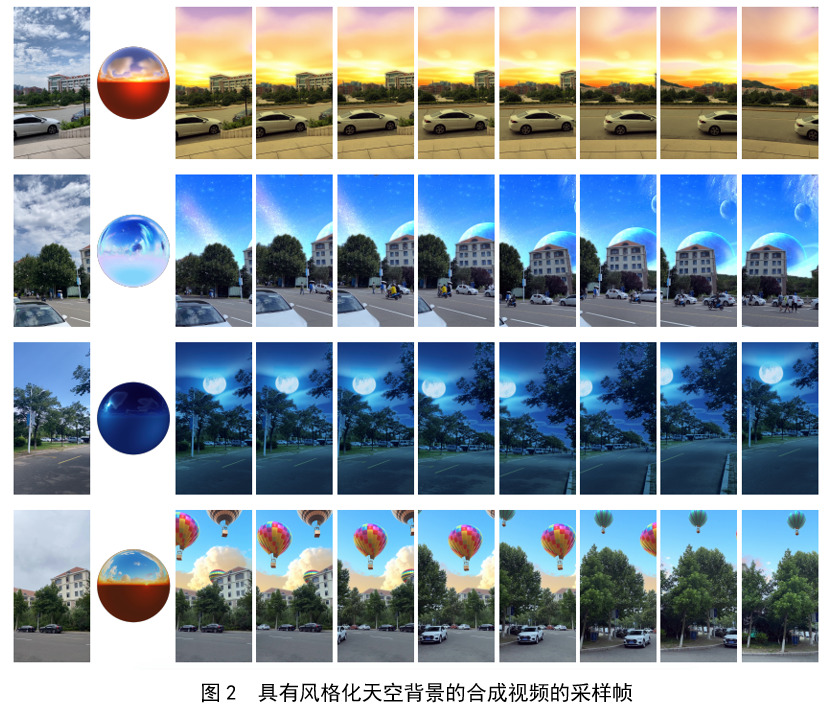

The development of AR technology has blurred the boundary between the real and virtual worlds. The sky is ubiquitous in everyday environments, so simulating it is an important part of AR, and replacing the sky in real time is a major challenge.

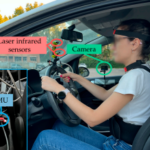

MobileSky is the first method to bring automatic sky replacement to mobile AR experiences. It takes camera color frames and IMU data as input and produces a near-realistic sky effect while running at approximately 30 FPS.
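At 30 FPS, segmentation, refinement and compositing together must fit in roughly 1000 / 30 ≈ 33.3 ms per frame. The sketch below shows only the last step, placing a rendered sky into the camera frame through a soft sky mask. The source does not describe how MobileSky composites the sky. Plain alpha blending is an assumption here, and the function name `composite_sky` is hypothetical.

```python
import numpy as np

TARGET_FPS = 30
# Per-frame time budget shared by segmentation, refinement and compositing (~33.3 ms).
FRAME_BUDGET_MS = 1000.0 / TARGET_FPS


def composite_sky(frame, sky, sky_mask):
    """Alpha-blend a rendered sky into a camera frame (hypothetical helper, assumed blending).

    frame, sky: float arrays of shape (H, W, 3) with values in [0, 1].
    sky_mask:   float array of shape (H, W) in [0, 1]; 1.0 means "definitely sky".
    """
    # Clamp the mask and add a channel axis so it broadcasts over RGB.
    alpha = np.clip(sky_mask, 0.0, 1.0)[..., None]
    # Sky pixels take the virtual sky, non-sky pixels keep the camera image,
    # and soft mask values along the horizon blend the two.
    return alpha * sky + (1.0 - alpha) * frame
```

A soft mask (rather than a binary one) avoids hard seams along the skyline, which is one reason the quality of the mask near the boundary matters so much.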

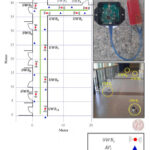

The main challenge during development was extracting the sky region from the camera feed quickly and accurately. To address it, the developers built a novel framework that combines a deep semantic segmentation network with post-processing refinement steps, producing high-quality, spatially and temporally consistent sky masks for camera frames in real time. Using the IMU data, they also proposed new sky-aware constraints to refine the weakly classified parts of the segmentation output: temporal consistency (using frame-wise correlated IMU data to align the previous frame with the current frame), position consistency, and color consistency.
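The temporal-consistency idea can be sketched as follows, under stated assumptions that go beyond the source. The camera is assumed to only rotate between consecutive frames, which is reasonable for the distant sky. `K` holds pinhole intrinsics, and the IMU-derived matrix `R` rotates directions from the previous camera frame into the current one. Under these assumptions, previous-frame pixels map to current-frame pixels through the homography `H = K R K⁻¹`. The previous sky probabilities are warped this way. Only "weakly classified" pixels, whose current probability falls in an uncertain band, are then blended with the warped values. The function names, the `(0.3, 0.7)` band and the 0.5 blend weight are hypothetical choices. The position and color constraints are not sketched.

```python
import numpy as np


def intrinsics(fx, fy, cx, cy):
    """Pinhole camera matrix (hypothetical helper)."""
    return np.array([[fx, 0.0, cx], [0.0, fy, cy], [0.0, 0.0, 1.0]])


def warp_prev_mask(prev_mask, K, R_prev_to_curr):
    """Warp previous-frame sky probabilities into the current frame.

    Assumes pure camera rotation, so pixels map through H = K R K^-1.
    Current pixels whose source falls outside the previous frame become NaN.
    """
    h, w = prev_mask.shape
    H = K @ R_prev_to_curr @ np.linalg.inv(K)  # previous pixel -> current pixel
    H_inv = np.linalg.inv(H)                   # current pixel -> previous pixel

    # Homogeneous coordinates of every current-frame pixel, shape (3, h*w).
    ys, xs = np.mgrid[0:h, 0:w]
    pts = np.stack([xs.ravel(), ys.ravel(), np.ones(h * w)])
    src = H_inv @ pts

    # Nearest-neighbour lookup; skip points that project behind the camera.
    z = src[2]
    ok = z > 1e-9
    sx = np.full(h * w, -1, dtype=int)
    sy = np.full(h * w, -1, dtype=int)
    sx[ok] = np.rint(src[0, ok] / z[ok]).astype(int)
    sy[ok] = np.rint(src[1, ok] / z[ok]).astype(int)
    valid = ok & (sx >= 0) & (sx < w) & (sy >= 0) & (sy < h)

    warped = np.full(h * w, np.nan)
    warped[valid] = prev_mask[sy[valid], sx[valid]]
    return warped.reshape(h, w)


def refine_with_temporal(curr_prob, warped_prev, low=0.3, high=0.7, weight=0.5):
    """Blend only weakly classified pixels (low < p < high) with the warped previous mask.

    Confident pixels and pixels with no valid warped value are left unchanged.
    """
    out = curr_prob.copy()
    weak = (curr_prob > low) & (curr_prob < high) & ~np.isnan(warped_prev)
    out[weak] = weight * curr_prob[weak] + (1.0 - weight) * warped_prev[weak]
    return out
```

Limiting the update to uncertain pixels keeps confident network predictions intact. The IMU-aligned history then resolves flicker where the network hesitates, typically along the horizon or around thin structures.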